2026-05-10 20:26

I’m deep in my AI burnout. I recently read the post “Do I belong in tech anymore?”, which I loved & would believe was written by me if it wasn’t, you know, not written by me. You should read it. I’m a software engineer and every day at work we discuss how we can better use AI: generating code, doing PR reviews, doing PR approvals, and turning concise writing into 5000 words that no one will bother to actually read. I’m miserable and I really need to prioritize the time to read Bullshit Jobs.

Josh (with parentheses) also recently made a great video, “AI and the Murder of Truth. And Air Bud”, about how AI cannot be correct because it is not concerned with the truth. You should also watch it; he put in a ton of effort and it shows.

But this blog post is not really about either of those two topics.

AI gets a lot of criticism for being wrong, and while I agree with this criticism, I’ve been thinking about how focusing entirely on quality 1) misses a lot of the issues and 2) leaves room for opportunists to evangelize about how the upcoming improved models will be the solution to all problems. The truth is I just don’t care if AI were perfect 100% of the time. Let’s do an exercise and imagine it: perfect output, always right, perfect images, perfectly written code from end to end, or the novel you’ve been meaning to write!

I would still have a problem with it. I still wouldn’t want to use it.

Often in AI discourse I will see good people attempt to be nuanced and defend the concept of AI by coming up with “ethical use cases” where the data is not stolen and the models are small. These are hyper-specific predictive models trained on (relatively) small datasets that do not need large data centres. They were created by a dedicated team to serve a specific goal. Think spellchecking, weather forecasting, image enhancing, automatic subtitles & summaries, translation, protein folding prediction, and text recognition. None of these had the same backlash as AI; they are just machine learning, and all of them existed and were possible before 2020.

The “problem” with that is it’s not something shiny and new that big tech can sell you, because small models will run on a lot of consumer/gaming-level hardware. They need to sell you on big models because then you have no choice but to rent time on their data centres.

I’m sorry, but when people in 2026 talk about AI they are not talking about small, intentional predictive models. They are talking about generalized LLMs trained on the entirety of human knowledge. These are the “spray and pray” approach to machine learning, where you don’t need to waste time collecting a good dataset or considering what problem you are trying to solve, because they can generate a response for any input. It is the slop approach to problem-solving: creating a specific model for a specific task is more expensive, so why not just rely on huge, over-trained models? When someone tries to come up with niche ethical AI use cases, they are good people trying to talk about technology in a good-faith, nuanced way. But big tech has no interest in returning that good faith, and the argument is naive about the actual problem with AI.

At a previous job we had an LLM team before 2020 that was generating a specific model for a specific task. In 2024, after LLMs became “the hot new thing”, they laid off the entire team because it was “cheaper” to use a generalized model and replace them with “prompt engineering”. After I left in 2025, they got rid of the entire health-focused org and moved everyone left to work on developing chat bots instead. The goal with the “age of AI” rhetoric promoted by large tech companies is not to think about small, ethical use cases for AI, it is to produce expensive slop for the masses. Whataboutisms about using AI to cure cancer are missing the point.

So I want to clarify here: when I say “AI” in this blog post, I am talking about the current marketing term for generative AI and generalized LLMs that are trained on billions of pieces of stolen data to perform literally any task. There may be other things that people call AI that do not meet this definition, but they are not the focus of this essay or of the current AI hype cycle.

My main proposition is that AI is slop not just because it’s wrong, it’s slop because of the volume. It doesn’t matter if the quality is good, there is so much of it produced every second that no one is going to actually take the time to review it, understand it, or use it. Any productivity “improvements” using AI fundamentally require either that:

It’s not slop just because it’s potentially bad or wrong, it’s slop because it’s careless. Why generate an entire book when you can’t even be bothered to edit out the prompt? Why should I spend my time reading or engaging with something that someone couldn’t be bothered to actually create? What’s the point of generating an entire new application when there’s no one who wants to write it, understand it, review it, maintain it, or use it? By the time you refer to code review as a problem (“the bottleneck of AI-enabled software development”) to be fixed (by AI), you’re long past slop.

AI evangelists seem to really believe that the difficult part of writing is your typing speed. They fundamentally don’t value the experience and skill required to write a good book, write good code, or create good art, either due to ignorance or for financial gain. They don’t understand what makes good art good. I imagine they believe that the reason their favourite author hasn’t released the next book in the series is because they type slowly or because they’re too wealthy to bother, not because complex ideas are hard.

The difficult part in all creativity (and yes, writing code requires creativity) is thinking, and in the time taken to write you are also thinking. When I’m writing the boilerplate code for a new class, I am also thinking about what the class will be used for, how to test it, and how to structure it. None of this time is wasted time (unless you’re using Java, but no one should use Java). Friction is actually an essential part of making good art, of learning, and of growing. School often deliberately limits your tools for this reason. If you’re never struggling or feeling uncomfortable, you are not growing.

Right now, while I am writing this and deciding what words to use where to convey what, I am both thinking and typing. I have so many thoughts floating around in my head and I need to figure out how I can most effectively communicate them. In drafting the sections of this blog post I am thinking through “what points actually defend my thesis?”. I’m uncomfortable and insecure; I’m considering how someone else will interpret my words.

In my last blog post (Finding Yourself in Creating for Yourself), I said it was impossible to make micro-decisions in what you want without slowing down and experimenting for yourself. I used the example of making clothing, but my point was that it applies to any creative endeavour, and it is fundamentally an anti-AI point of view. Fast fashion can make a shirt that is 100% a shirt in the same way that AI can make a believable image, and similarly fast fashion is slop because it is trash. About 100 billion garments are produced each year, and 92 million tonnes end up in landfills. Most clothing items are worn only 7-10 times before being discarded. Both fast fashion and AI harm the environment we need to live. My problem with AI is the sheer volume it produces, because even fast fashion is bound by the constraints of textile production. AI is the most extreme and unrestricted version of slop (so far).

We don’t need more things. We definitely need more equal access to things, but again, we don’t actually need more things. We have enough clothing on earth for multiple generations of humans. The solution to inadequate access to clothing is not to produce more clothing. We don’t need a brand new programming language every week; we need to understand and maintain the critical services we already have. No one is promoting AI for its ability to solve real problems like the housing crisis. We just don’t need more slop.

In the same way that I believe clothes worth wearing are clothes worth mending, I believe that software worth having is both worth writing and maintaining. I believe in the right to repair, because otherwise it is just slop.

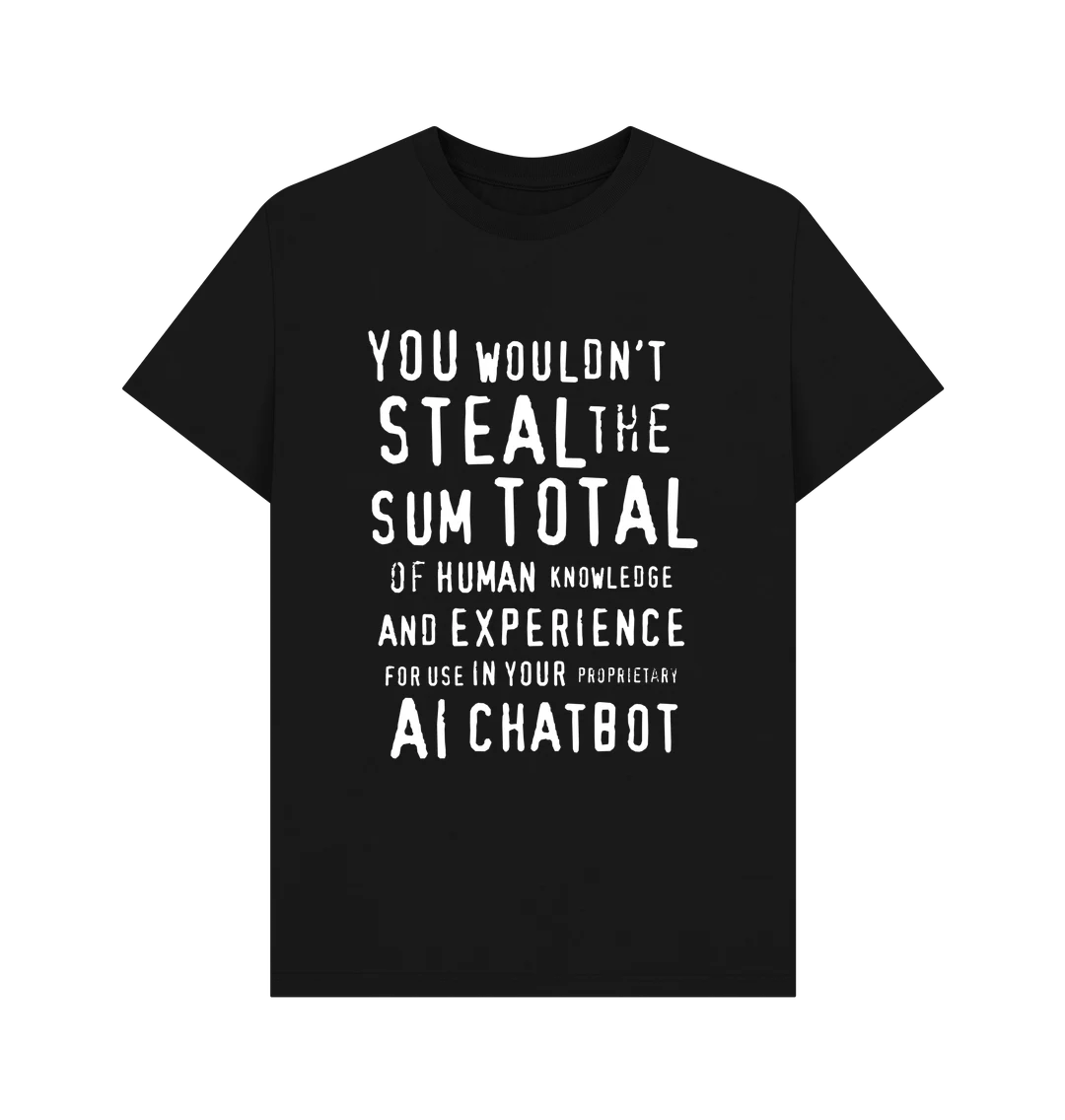

I came across this great T-Shirt recently:

It’s probably not a surprise to you that AI is trained on large amounts of data that were pirated and stolen by large corporations for their own profit. This is a record-breaking level of theft.

I’m not pretending that inequality didn’t exist before AI. Technology and industry did a lot of harm before AI. Hollywood was not a shining pillar of goodness and well-meaning intention before AI, and Google gave up “don’t be evil” before they invested everything in AI. Plagiarism was a problem before, and the copyright system favours those who have the money to leverage it (Disney) while failing the people who actually need it most (small artists).

But at least before when I bought a book, I took comfort in knowing that I was helping an author be able to afford food. An animator at Disney was credited for their contribution. A cameraman on a set earned a salary for the work they did that day. A software developer enabled me to communicate with my loved ones and an engineer designed the device I did it on, and with that money they were able to support their families.

Of course, I knew that the corporations and executives took as much of that money as they could. But AI is their solution to the “having to pay employees” problem. None of these people ever thought about properly compensating the creators whose work they stole. They also do not care if the output is good or correct, because that is not the point. They have volume on their side, and if you can’t find anything else in between the slop then they have succeeded. If they can blur the line between slop and craft, even better.

Investors are pouring & losing billions in AI as an attempt to enable an accelerated consolidation of power (money) within even fewer people (themselves). That is the point of AI.

Defenders say that AI is “just a tool”, but if it were just a tool, tech companies wouldn’t be making AI fluency an explicit performance metric. If not using AI impacted my performance 10x (as they claim), it would be obvious, but AI is the only “tool” that explicitly appears on my performance reviews. My manager says that resisting AI is like resisting using a spreadsheet, but spreadsheets come with trust and confidence. The enforcement of AI is not about AI being useful; it is about power.

Ironically, preliminary research shows AI only makes devs feel like it is faster but is actually slower. The response to this is usually that devs need to be even sloppier and run more agents in parallel. Without thorough self-review this is just offloading most of the work onto the code reviewer… which I suppose makes the author feel more productive, but it is not a net gain.

But I’m getting distracted, I proposed earlier that the quality is not the only problem, so let’s do an exercise and fully commit to the idea that all the vibe coding marketing is true and it’s that good and 10-50x more productive.

Do the “productivity improvements” actually improve your life?

No one is implementing a 4-day work week and no one is even thinking about paying you 10x more to thank you for that productivity. Your workload isn’t decreasing; you’re expected to churn through “10x” more topics in parallel, without dedicated focus, and burn out even faster. Run 5 agents in parallel for 5 different features and ship each one when its output seems fine. If one of them causes an incident, they have set it up so you take all the blame. Maybe I’d feel differently if my life were improved, but that’s just not how it works.

AI credits are cheap now but AI companies are bleeding money. The price will increase for them to be viable, and they’ll keep increasing it past that just because they can. There will be no fair-market competition to lower the price, because the infrastructure, power & water needed to train giant models will be consolidated in a few private companies. It’s estimated that training OpenAI’s GPT-4 took over $100 million and consumed 50 gigawatt-hours of energy, enough to power San Francisco for three days. This is the same reason why Amazon sold books at a loss until their competition died, why the few companies that own the infrastructure keep internet prices high in Canada, and why Uber subsidized rides until taxis couldn’t keep up. They want you so reliant that you have to keep paying.

Eventually I think the AI bubble will implode for this reason. Maybe some companies will survive to become profitable. It hurts me to think of all the harm that will happen before then to both people and the environment.

I don’t think people who use AI are evil. A lot of the problems I discuss in this blog post are not individual but systemic problems. People need to make money and capitalism demands the line goes up; that’s how our lives work. However, thinking that’s wrong and that we should improve it doesn’t make me an ignorant technophobe. I’m just a burnt-out software engineer who used to believe technology could help people, and who wants to help people. I’m not perfect either: despite my previous blog post, I am also bad at slowing down. I often get frustrated and rush a sewing project and regret it.

If you’re disillusioned at your job and would rather spend energy on something else you’re actually passionate about, I sympathize. If you don’t have the emotional or financial bandwidth to resist slop, I sympathize. If you aren’t getting adequate support from your manager, your community, or your doctor and you lean on AI, I wish we could do better for you.

If vibe coding sparked your interest in learning to code, I am genuinely so happy. I believe more people knowing how to code is a good thing, and I also believe anyone can learn how to. Computers are not magic, despite how much tech companies want you to think so by putting sparkles on every AI feature. Understanding how they work is important. All I can do is ask you to consider learning for yourself. You cannot learn without friction and you cannot develop a new skill without struggle. You cannot learn a foreign language only through reading; you need to write or speak it too.

I’ve been complaining to the people close to me about how exhausted I am by hearing about AI. I don’t even get a break from it after work; it’s invaded every aspect of my life, from my friends and family to my knitting socials. I thought crypto, NFTs, and the metaverse were dumb too, but they didn’t intrude on every aspect of my life.

Does acknowledging any of this make me feel better? Well, no. Does it help my burnout to know that other people also have bullshit jobs? Again… no. Am I excited about the future? Not at all.

Am I angry and sad? Yeah.

AI is careless slop that is built on a foundation of devaluing people when people are already so devalued. I don’t want to use it and I want to take care and be intentional in my work.

I already rarely feel like I belong in tech as a woman, as a neurodivergent person, as a disabled person.

I don’t know if there is a spot left in tech for me.

Honestly, I don’t know if there is a spot left in the world for me.

I acknowledge the hypocrisy in talking about AI to complain about being tired of hearing about it. In penance, please enjoy some cute photos of my cats.

My partner gets 50% credit on this post for listening to me rant endlessly and engaging in critical, thoughtful discussion about all the topics covered. A lot of these sentences could’ve equally been written by him.

Thank you to all the people in my life who have been supportive (especially when we disagree).

I don’t know if anyone noticed, but I intentionally avoided ever naming an AI company or AI product, other than in one sentence I pulled from a source. I didn’t want to get distracted by demeaning/comparing specific companies/products or draw further attention to them (not that my small personal blog matters that much, but I do believe words have impact). I hope it didn’t make the text confusing to read.